By Hugo Seymour, COO, Della

What can the legal world learn from the automotive industry’s journey to driverless cars?

While everybody talks about it and many claim to be doing it, the reality is that, a bit like driverless cars five years ago, the use of true AI in legal process automation is nascent, and most folk are, rightly, fed up with the hype. Robots are a long way off replacing lawyers, and they are unlikely to replace many, if any, in the short term.

There is also a long way to go before ethical standards are required to help govern the use of legal AI. But what can we learn from the automotive industry’s journey to driverless cars? Are there any similarities or learnings we can take from that journey? If nothing else, could it provide us with a more realistic framework and vocabulary to discuss the current and future abilities of AI in law, and the potential ethical implications of its use?

The six levels of autonomous driving

At first glance, the similarities between the two journeys are clear. Automating any complex, manual process is neither cheap nor smooth. Crucially, both journeys are taking time and have involved a number of steps to get to where they are today. In both, the gap between reality and hype is often wildly underestimated and miscommunicated.

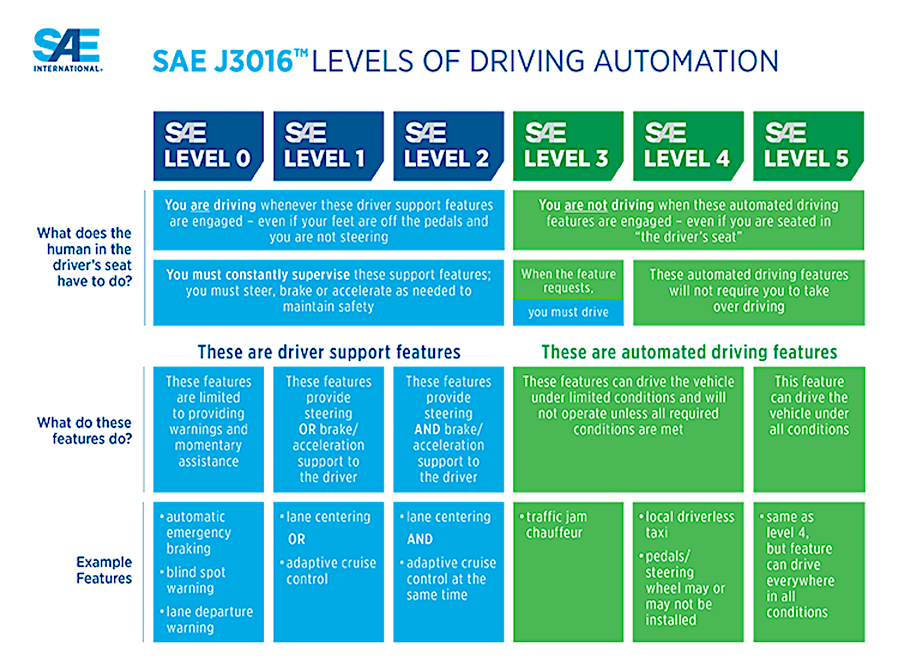

One of the most prominent breakdowns of the steps it has taken to automate the process of driving is the SAE Automation Level Scale (below):

SAE’s scale measures the different levels of automation between human drivers and fully driverless cars. Starting at Level 0, where a driver has full control of a vehicle, and running to Level 5, where a vehicle is fully automated, regardless of the conditions, and where the driver may not even be able to take control of the vehicle, even if they wanted to.

All models are wrong, but some are useful[1]

The SAE’s scale, in particular, is a useful way of looking at the evolution of legal AI. It provides us with a valuable schema or framework for establishing how difficult an automated system is to set up, and what level of responsibility that system should take once a process is up and running.

This is not a definitive answer to the classification problem that exists in legal AI, but it is a useful first step towards building a common vocabulary to clear some of the long-standing fog surrounding the current and future abilities of AI in law.

An autonomous driving manual for legal AI?

Document review and, with the rise of CLM systems, document management have been an early and successful use case for AI in legal. Many users apply combined forms of supervised and unsupervised machine learning to review and extract insights from multiple legal documents, reducing the amount of time and (human) resources required to complete that process.

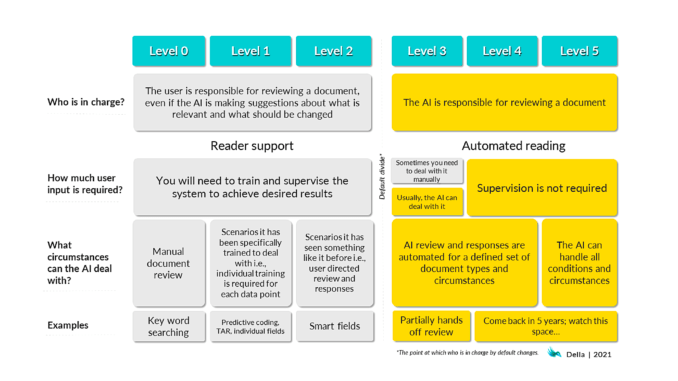

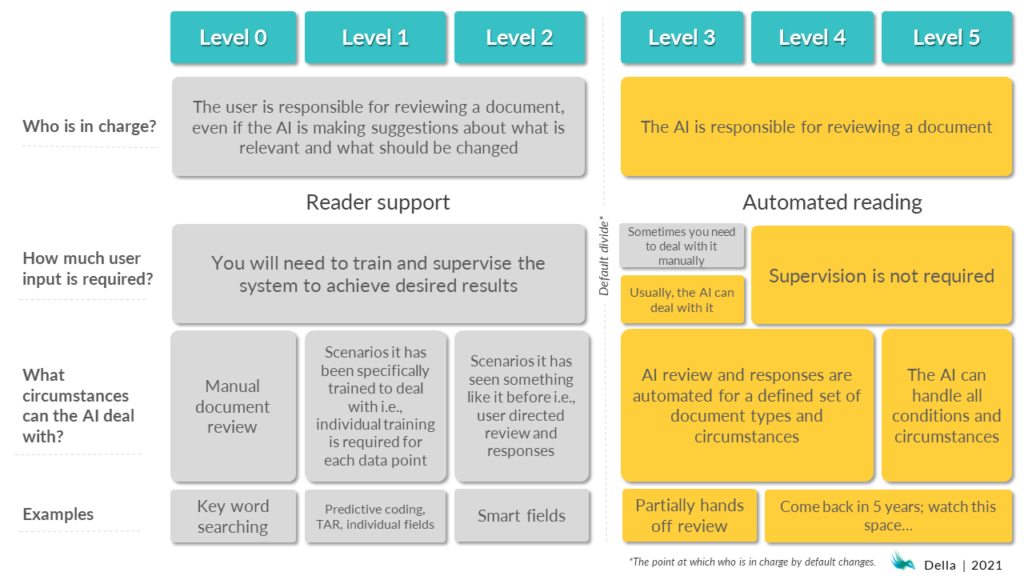

When the SAE scale[2] format is used to illustrate the levels of automation involved in document review, for example, it looks like this:

So where are we now?

Level 0 automation will be very familiar to most people in the legal profession. Worldwide, most of the time a legal professional reads a document, it is without any technical input beyond the use of templates and applications like MS Word, with armies of junior and mid-level lawyers poring over multiple documents for days on end.

However, I would argue that you have to keep working just to stand still, especially in legal, with its constant demand for efficiency. So the broader question is what the cost of maintaining the status quo will be for society and the legal market. Driverless cars and transportation-as-a-service will change lives and help to cut carbon emissions. For consumers of legal services, the benefits of automated services are equally clear: improved access to faster and cheaper services, and a deeper understanding of an individual’s or a business’s rights and obligations.

Pushing the AI-ccelerator

The majority of today’s legal AI document review and augmentation tools have reached Level 1. This is changing. The contributions of Google and others have driven sectoral moves towards using larger language models[3], which allow a system to generalise from one task to another. Simultaneously, the use of additional datasets facilitates transfer learning[4], which further reduces the need to train models for individual scenarios. This means that you rarely have to train a model from scratch for a project, which makes these systems far more usable.

Now, the first tentative steps towards Level 3[5] are being taken for reviewing well-understood documents, like NDAs or an organisation’s own contracts.

At Della, we consider our AI to be a mixture of Level 2 and Level 3, depending on how the technology is implemented and the user’s appetite for trusting AI with that particular task.

Moving on to Level 4, the first point at which user input is not expected, will require confidence, trust and time. Vendors, like us, will need to be confident that we understand the scenarios and use cases that our AI is being used for, and not over-sell the current abilities of our tools. Users will need to trust vendors that their chosen solution performs as sold and advertised. Both parties will need to take enough time to verify that the solution is working as expected before it is left to get on with its job.

It is important to understand that ‘Level 5’ automation is currently not possible via any of the AI-driven document review tools on the market.

Are we there yet? And what are the ethical and moral implications of current uses of legal AI?

The biggest gap in the scale is between Levels 2 and 3, as crossing it fundamentally changes the answer to the question of ‘who is in charge?’ The debate about liability is still raging with driverless cars, and those arguments are likely to become far more complex when legal AI tools become more widely used in more complex scenarios, for example when they are made available to the public rather than to legal AI’s stereotypical users in legal departments and professional service firms.

The Law Society of England and Wales recently noted that the American Bar Association’s requirements suggest that lawyers should get informed consent from the client for using ‘AI’ in carrying out any task[6]. We think more definition is required. Your taxi driver would not request your informed consent to use assistive braking on their vehicle (which is likely an AI-enhanced algorithm). Neither should consent be sought or required when a vendor is using a legal AI system at Level 2 or below, as the operator is still in control of the output of any product. The reverse is likely true when we get to systems at Level 3 or above.

The second big difference at the top end of the scale is the circumstances under which a technology or tool can be deployed. In automotive terms there is a sharp distinction between automatically navigating in areas that have been designed specifically for automated vehicles and country lanes or windy, narrow streets shared with other road users and pedestrians.

One positive to note is the industry’s move towards standardisation. Standardisation reduces the complexity of the system required to solve a problem. Standard documents, such as those from the oneNDA project[7] and the much older suite of Loan Market Association documents for syndicated loan agreements, make some elements of document analysis, and any subsequent reaction to them, easier. They are the equivalent of segregated spaces for vehicles. Improved standardisation would reduce the level of automation required to review some documents, which, in turn, would require less development and effort from all parties.

Why is this important?

We started by comparing the evolution of self-driving cars with the progress of legal AI, specifically assisted document review. These areas share a history, tracked by Gartner in its hype cycle[8], of promises, hype and underwhelming delivery, combined with initially limited adoption. As a result, I believe it is important to establish some context for legal AI’s evolution, to help manage expectations and make it clear what a successful outcome for an AI-driven project would look like.

If someone buys legal AI expecting Level 5 automation they are going to be disappointed. If someone buys a Level 4 system, they are overwhelmingly likely to be disappointed. If they are buying a Level 3 system they are probably going to be disappointed.

Vendors need to be clear about what it is they are selling, and buyers need to be aware of what their technology can and can’t do. We need to establish a common vocabulary to describe what state-of-the-art currently means. Once we get to that point the entire industry will be able to move towards automation faster.

We’re exhibiting at Legal Geek, so if you want to see our AI, say hi, or discuss this article with the Della team, come and find us!

[ Artificial Lawyer is proud to bring you this sponsored thought leadership article by Della. ]
