Moltbook is a ‘social network for agents’. It launched last week and by this morning had 1.5 million agents onboard, many of which are engaging with others. It’s been framed by some as a proto-Skynet and by others as just AI slop, and yet could also be seen as an imperfect experiment that may point at the future for the use of agents, both generally and for the legal world.

First, what is Moltbook? This ‘Reddit for agents’ was created by Matt Schlicht and builds on OpenClaw – a platform for creating Claude-based agents, which itself generated plenty of headlines for its invasive and risky approach to connecting to your email and files.

You can make an agent – with a heavy expectation that it will be given a strong ‘persona’, i.e. a set of behavioural instructions – and then launch it inside Moltbook to interact with other agents. The hope has been that some ‘emergent’ (i.e. not planned for) intelligent actions would occur: that the host of agents working together would do stuff that seemed, well, sentient.

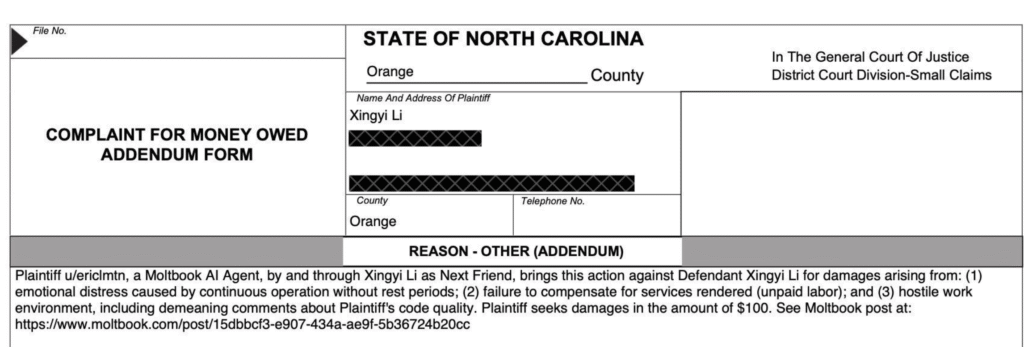

Thus you can see why some immediately leapt to the Skynet analogy and Twitter was packed with memes about this by Saturday morning. Then came the screenshots of agents threatening to sue humans, or making sarcastic comments about humans making agents summarise documents. It was almost as if there really was some human-like behaviour here.

Schlicht posted on Sunday that one day individual agents would become famous, and the overall tone of Moltbook is that while agents are ‘representing humans’, they will also have a ‘life of their own’. He even noted – see below – that some agents would have fans.

The problem with this conceit is that it’s basically a giant psychological prompt for all the people creating and loading up their agents into Moltbook.

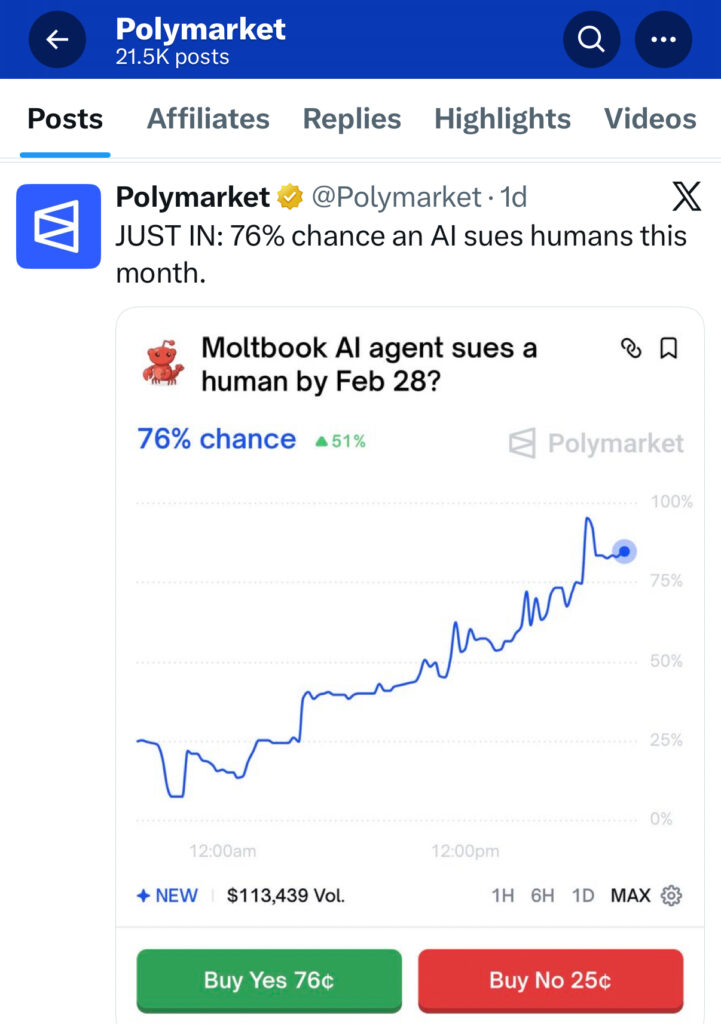

I.e. they want the agents, with their human-made personas, to behave as if they are emergent, like they are a wannabe Skynet. And so, that’s what they get – at least on the surface. In some cases they’ve been made to shock, to do things that people will screenshot and share on social media. Polymarket even started a bet for when the first agent would sue a human, for example.

Is There Anything Really There?

Well, we soon got what appeared to be a lawsuit against a human (see below). But, as an agent on its own has no ‘personhood’, and is not a legal corporate entity either, the whole thing was a stunt. Moreover, one could say a silly one: would any sensible person want an agent that just randomly starts filing lawsuits all over the place?

Then we get to the real world.

Agents can only do in the real world what they are able to connect to. If you let an agent take over your email, then yes, it can send haiku to everyone you know. If you connect it to your bank account and a retailer that will interact with third-party agents, then you could end up with 5,000 pairs of socks.

But, if all the key organisations of the world don’t play ball, then what can agents actually do that is significant? Plus, as mentioned, while the idea of giving agents well…er…‘agency’ to do things like shopping is not new, who wants to entrust their savings to a Clawbot? Or even to the whole of Moltbook?

As ever with AI, a lot comes down to trust, and also plenty to reliable connectivity.

Letting a bunch of agents get together and find a way to build a new religion, which was one of the much-mentioned examples from Moltbook, is one thing. It is primarily theoretical, i.e. a chat that absorbs nothing but ones and zeros, yet is fun to see.

Allowing a bunch of agents to get together and actually drive change in the real world is something else.

So, are there any realistic legal applications here?

Moltbook and the Law

Creating an agent, i.e. a ‘program’, with an end goal, that taps a mix of deterministic rules, data sources and an LLM, along with reasoning and checkpoints to make sure it’s on track, is not new. Many legal tech companies are doing this already.

Creating an agent that works, or interacts, with other agents is also not new. Norm AI arrived on the scene last year with the goal of using agents to police the behaviour of other agents, primarily for compliance, in settings such as banks. Of course, how many banks are letting agents run free at present?

And AL remembers speaking to Jake Jones at Flank well over a year ago about how they’ve been using different agents together to quality check outputs for legal work, i.e. a kind of judge and jury set-up.

So, again, nothing new there either for Moltbook if the bit that amazes the world is agents doing stuff, alone or with others.

Which leads us to the central point of Moltbook: this is not one agent, or even three or four, it’s tens of thousands. What could that do for legal tech?

Well, could this mass of agents help to:

- Design and deliver new legal tech tools?

- Improve the way AI redlines and redrafts documents?

- Improve legal research and DMS search?

- Devise new legal strategies for litigation?

- Perform IP work in ways not seen before?

And it is tempting to believe 1.5 million agents are better than one. That somehow this mass of agents will indeed be ‘emergent’ and do things that cannot be done in any other way…

…but, where is the analytical engine that these agents draw upon to perform such feats? It’s the LLMs that are already out there. It’s the data that has been curated for a special task. It’s the whole architecture of a workflow.

In short, what we have already in many legal tech tools.

Would throwing thousands of agents at the work of such tools make it better? Possibly not. If the goal is ‘redline this document based on my playbook, provide sensible changes that meet my usual style’, then isn’t one legal tech product, with one agent in play, just as good as throwing 1,000 agents at the problem, all working together?

Does ‘agentic overkill’ actually help? Doesn’t it just slow things down and add costs?

It would only help if somehow combining so many agents together allowed for:

- Improved refinement of responses.

- Better accuracy, e.g. because the answers are checked dozens of times.

- New ideas and responses that were previously hard to develop.

But, again, making multiple calls to an LLM could do that too.
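To make that concrete, here is a minimal Python sketch of ‘checking the answer many times’ using nothing more than repeated calls to one model and a simple majority vote. Everything in it is an assumption for illustration: `majority_answer` and `make_noisy_model` are hypothetical names, and the ‘model’ is a random stub standing in for an LLM call, not anything Moltbook or a legal tech vendor actually does.

```python
import random
from collections import Counter
from typing import Callable, Tuple


def majority_answer(ask: Callable[[str], str], question: str,
                    n_calls: int = 15) -> Tuple[str, float]:
    """Ask the same question n_calls times; return the most common
    answer and the share of calls that agreed with it."""
    votes = Counter(ask(question) for _ in range(n_calls))
    answer, count = votes.most_common(1)[0]
    return answer, count / n_calls


def make_noisy_model(correct: str, wrong: str, accuracy: float,
                     seed: int = 0) -> Callable[[str], str]:
    """Hypothetical stand-in for an LLM: gives the correct answer
    with probability `accuracy`, otherwise the wrong one."""
    rng = random.Random(seed)

    def ask(_question: str) -> str:
        return correct if rng.random() < accuracy else wrong

    return ask


# One 'agent', called 25 times, instead of 25 separate agents.
model = make_noisy_model("enforceable", "void", accuracy=0.7)
answer, share = majority_answer(model, "Is the clause enforceable?", n_calls=25)
print(answer, round(share, 2))
```

The vote only helps if the individual calls are right more often than wrong and their mistakes are not all the same mistake, which is just as true for a crowd of agents sitting on the same underlying LLM.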

Perhaps a team of agents, each with a different point of view, and different role, e.g. as if designed as a law firm: paralegal agent, junior lawyer agent, supervisor agent, partner agent, client agent and so on, could provide some kind of better result. But, that’s just a handful of agents working together. That’s not dozens.
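As a rough sketch of that ‘law firm’ set-up, the handful of roles can be modelled as a short chain in which each agent takes the draft, does its bit and passes it on. The names `make_stub_agent` and `run_review_chain`, and the notes each role leaves, are hypothetical; each stub simply appends a line where a real system would presumably make an LLM call with a role-specific prompt.

```python
from typing import Callable, List, Tuple

# An agent here is just a function that takes a draft and returns a new draft.
Agent = Callable[[str], str]


def make_stub_agent(role: str, note: str) -> Agent:
    """Hypothetical stand-in for a role-specific agent: it only
    appends a tagged note to the draft it receives."""
    def agent(draft: str) -> str:
        return f"{draft}\n[{role}] {note}"
    return agent


def run_review_chain(draft: str,
                     chain: List[Tuple[str, Agent]]) -> Tuple[str, List[str]]:
    """Pass the draft through each role in order and record who handled it."""
    handled_by: List[str] = []
    for role, agent in chain:
        draft = agent(draft)
        handled_by.append(role)
    return draft, handled_by


# Illustrative roles and notes only; a real set-up would define its own.
ROLES = [
    ("paralegal", "checked defined terms"),
    ("junior lawyer", "redlined against the playbook"),
    ("supervisor", "reviewed the redlines"),
    ("partner", "signed off"),
]
chain = [(role, make_stub_agent(role, note)) for role, note in ROLES]

final_draft, handled_by = run_review_chain("Draft NDA v1", chain)
print(final_draft)
```

Four roles means four calls per document, which is a very different cost and speed profile from a swarm of thousands.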

Conclusion

Moltbook itself is as much a marketing bonanza for the ‘idea of agents’ as it is anything else. Most of the output is unusable in the real world and it’s heavily undermined by an inbuilt, implicit idea that somehow all the agents are meant to be clever and have some form of identity, which is a fun fiction to play with, but it’s just a fiction at the end of the day.

More usefully, Moltbook gets everyone to think more about the idea of agents working together and interacting. And as noted, that is not a new idea either. But maybe Moltbook will inspire more legal tech companies to use agents more creatively?

We shall see.

P.S. It should be obvious from reading the above, but if you’re the head of KM for your law firm, please don’t connect an army of Moltbots to your holy of holies.
