One of the leading tech investment funds in the world, Sequoia, estimates that around $60 billion worth of externally handled legal work – across transactions / contracts and also paralegal / LPO level needs – can be absorbed by AI ‘autopilots’. Let’s unpack this.

Earlier this month, the fund’s Julien Bek wrote a think piece called ‘Services: The New Software’. In it he made a very precise analysis of how services – including legal – and AI will blend together.

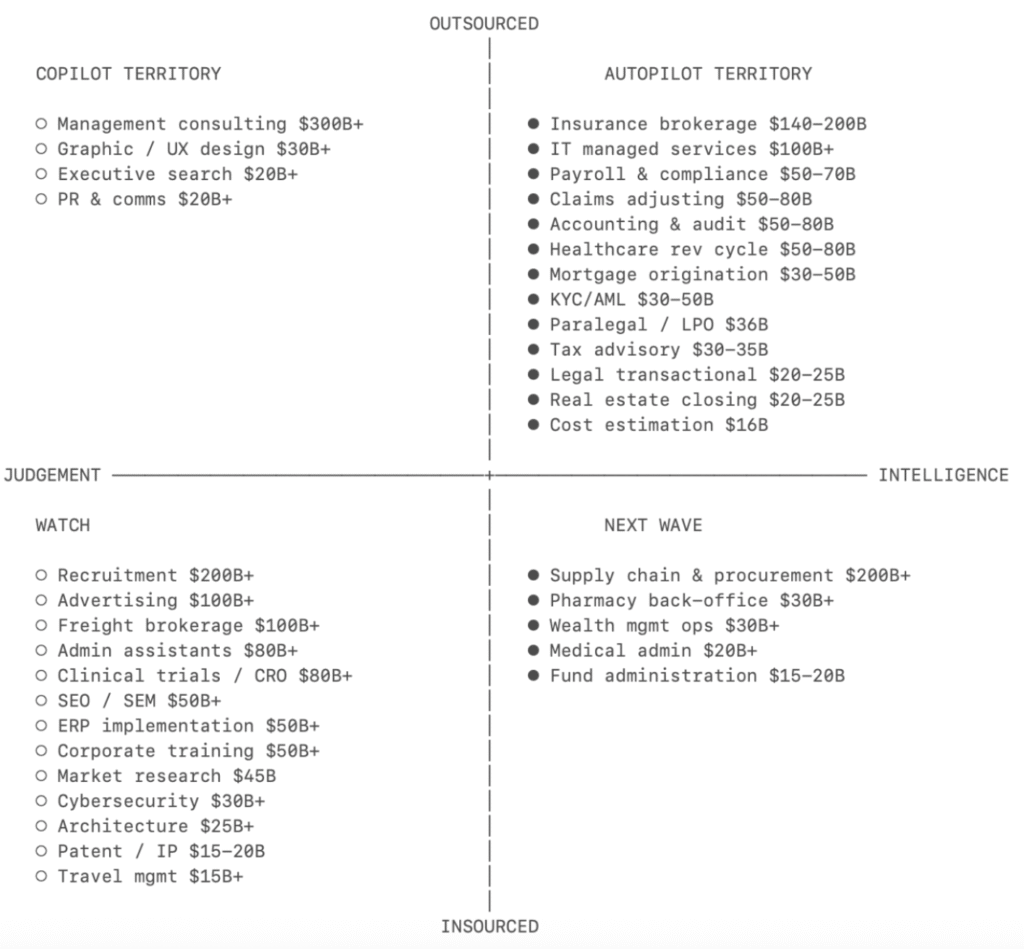

The short version goes like this: services can be split into ‘intelligence’ and ‘judgment’. Intelligence is what AL often calls complex work at scale, i.e. work that is not easy to do and has lots of rules and details, but which can be largely automated if you can master it, crystallise the workflows, and capture the data you need to perform the task. In short, it can be turned into a task that an ‘autopilot’ can do.

Then there is ‘judgment’. This is the work that is so complex, with so many edge cases and so many unexpected and multi-dimensional aspects, that you really need skilled humans to handle it. That doesn’t mean the core of that work is not ‘intelligence’ as well, e.g. a lawyer may use a tool to fetch data or do a basic redline, as above. It’s just that the workstream demands plenty of human oversight and… judgment. Hence you need ‘copilots’, i.e. an AI tool that works as a little assistant, doing your ‘legal work chores’, but cannot really be used without your professional judgment.

Insource vs Outsource

So, that’s one axis, autopilot (intelligence) vs copilot (judgment). The next axis in the analysis (see image below) is outsource vs insource.

The TAM (total addressable market) chart notes that the quadrant of highly outsourced and highly intelligence-focused needs contains paralegal / LPO work worth $36 billion and legal transactional work worth $20-25 billion, i.e. around $60 billion in total at the upper end. All of this is already outsourced and is very good ground for autopilots.
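To make the arithmetic behind that ‘around $60 billion’ explicit, here is a minimal sketch that simply adds the two figures quoted from the chart. The function name and variable names are AL's own illustration, not anything from the Sequoia piece, and the only inputs are the numbers cited in this article.

```python
# Figures quoted in this article from the Sequoia TAM chart, in billions of US dollars.
PARALEGAL_LPO = 36
TRANSACTIONAL_LOW, TRANSACTIONAL_HIGH = 20, 25


def outsourced_autopilot_tam(paralegal_lpo, transactional_low, transactional_high):
    """Return the (low, high) total for the outsourced, intelligence-heavy quadrant.

    Hypothetical helper for illustration only: it just sums the quoted figures.
    """
    return paralegal_lpo + transactional_low, paralegal_lpo + transactional_high


low, high = outsourced_autopilot_tam(
    PARALEGAL_LPO, TRANSACTIONAL_LOW, TRANSACTIONAL_HIGH
)
print(f"Outsourced autopilot TAM: ${low}-{high} billion")
```

The range works out to $56-61 billion, which is where the rounded ‘around $60 billion’ headline figure comes from.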

The idea here is that if a company is outsourcing, e.g. to law firms, LPOs, ALSPs, NewMod law firms, the Big Four legal arms, and more, then it is already in the right mindset to see that work move to autopilot.

As Bek writes: ‘If a task is already outsourced, it tells you three things.

- One, the company has accepted that this work can be done externally.

- Two, there’s an existing budget line that can be substituted cleanly.

- Three, the buyer is already purchasing an outcome. Replacing an outsourcing contract with an AI-native services provider is a vendor swap. Replacing headcount is a reorg.’

Now, AL would quibble with this for legal, as companies go to law firms not just because they want to outsource, but because they have no choice for a host of reasons, which could include the need for specific skills, risk externalisation, and simply a law firm having the human resources to handle that task.

But… on the more mundane work, the analysis is right. Companies are sending work out to external parties that could either A) be done internally if they had the means, or B) if it is outsourced, be turned over to autopilots, or at least carefully managed ones.

AL then wondered: why not start by considering autopilots for inhouse teams? Why focus on externalisation first?

Bek explained that: ‘The playbook: [tech] companies should start with the outsourced, intelligence-heavy task. Nail distribution. Expand toward the insourced, judgement-heavy work as the AI compounds. The outsourced task is the wedge. The insourced work is the long-term TAM.’

And also, ‘Today’s judgement will become tomorrow’s intelligence. As AI systems accumulate proprietary data about what good judgement looks like in their domain, the frontier will shift. Copilots and autopilots will converge.’

I.e. use what is outsourced as a training ground for legal AI, build out autopilots that can handle the simpler work, and today sell copilots for the judgment work….but in order to gain more insights that can eventually lead to more advanced autopilots.

Interestingly, Bek mentions one legal AI platform, Harvey; and one NewMod, Crosby.

‘Until recently, AI models were still developing intelligence and judgement, so the right approach was to build a copilot first: put AI in the hands of a professional and let them decide what to do with it. Harvey sells to law firms….The professional is the customer, the tool makes them more productive, and they take responsibility for the output.

‘Today, the models are intelligent enough that in some categories the best place to start is as an autopilot. Crosby sells to the company that needs an NDA drafted, not to outside counsel. The customer is buying the outcome directly.’

Conclusion

First, is the number realistic? Yes. It feels like this analysis is US-centric, and the US is a $500 billion-plus legal market, so $60 billion of that is not a huge amount and seems possible in the next few years.

Does the analysis make sense? Largely, although AL would highlight that a lot of the most process-driven legal work – which is a great fit for autopilots – is already inside companies and thus it’s not outsourced.

But, if we take the point that the goal is to address ‘intelligence’ work, then the broad analysis makes sense. That said, as noted, AL would say that legal AI developers should build autopilots that fit inside inhouse teams today as much as ones that companies will outsource work to.

The analysis also raises some bigger questions, such as:

- Are all legal AI systems – whether consciously or not – leading us to a stage where the copilots they’re developing one day become autopilots? And if so, what does that do to law firms?

- Is this fundamentally a rallying cry for the benefits of NewMods? It looks like that to AL. Sequoia is an investor in Crosby, and also Harvey – i.e. they are betting on two approaches at the same time. But, the analysis here seems to favour NewMods in the long term, as they sell autopilots with lawyer oversight.

- How long will it take for some of the more complex judgment work – which is happening hand in hand with a copilot – to become work that can then be done largely by an autopilot? Some may say ‘never’, but a decade ago some may have said that using AI to summarise a document was impossible, and here we are.

Overall, this is a great analysis, although it does not explore the process work that inhouse lawyers already do, i.e. insourced intelligence work that is a good area for autopilots today. You can read the whole article here.
