As the legal and regulatory world becomes more familiar with AI technology, more people are seeing how Natural Language Processing (NLP) and machine learning can create new applications in their field. One such application is PenaltyAI Search, the brainchild of Global-Regulation’s founders, CEO Sean Goltz and CTO Addison Cameron-Huff.

Artificial Lawyer caught up with the Toronto-based founders and asked them about their new creation and what kind of impact using NLP and machine learning in this way would have on global compliance.

First of all, can you briefly tell the readers what PenaltyAI Search does? And who would be the main users of this product?

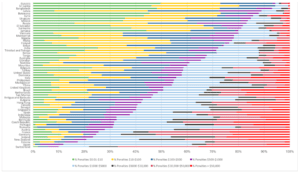

PenaltyAI Search is the first and only AI system that identifies compliance clauses in legislation on a global scale, extracts the actual penalty amount and serves it all to the user in US dollars.

This tool is intended for risk and compliance professionals, who can use it to search for and identify risk levels and compliance requirements on a specific topic across jurisdictions without even reading the law.

It can also be helpful for academics and researchers looking at the comparative aspects of compliance across the world. We have many academic clients at institutions like Oxford, Harvard, and Monash, who use our search tools to explore and compare world laws.

And what does Global-Regulation do?

Global-Regulation.com is the largest search engine of legislation and technical standards from around the world. We offer a searchable English-language database of 1.55 million laws, regulations and technical standards (the standards through our partnership with ANSI) from 80 countries.

We use Microsoft and Google machine translation to translate legislation from 26 languages into English. Building on this big data, we are able to offer cutting-edge analytics such as legal complexity and PenaltyAI Search.

What was the inspiration for PenaltyAI? I understand part of the origin story came from one of your team working with a large accounting firm. Could you tell us more about this journey?

The inspiration came from an internal discussion about what we could do with our big data. One of the reasons Global-Regulation’s team works so well is that we both come from the same field (law), but Addison is a programmer while Sean is an academic. This combination lets us think outside the box and come up with innovative ideas.

As for the journey, that was a literal journey from Toronto to New York. The accounting firm, one of the Big Four, was interested in investing in us at an early stage. We declined because we wanted to keep our independence and develop the product as we saw fit. Looking back, we were 100% right to do so.

Getting a bit technical now: the penalty search is described as an AI system. Does it use Natural Language Processing (NLP), and does it have machine learning capabilities?

Sean has co-authored an academic paper (“Enhancing Regulatory Compliance by Using Artificial Intelligence Text Mining to Identify Penalty Clauses in Legislation”) that is currently under consideration for presentation at the 16th International Conference on Artificial Intelligence and Law.

This paper explores the machine learning (ML) side and compares the results with the NLP approach we have taken. Based on our research and the paper’s results, the NLP approach is the better way to go. The details include more mundane but important algorithms, such as excerpting based on the location of phrases in the text. In the future we may combine the two, using the algorithmically derived examples as training data for the ML system.
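To make the rule-based (NLP, non-ML) route concrete, here is a minimal Python sketch of flagging candidate penalty sentences in English legislative text with hand-written trigger patterns. The patterns, the naive sentence splitter and the name `find_penalty_sentences` are hypothetical illustrations; the interview does not disclose Global-Regulation’s actual rules.

```python
import re

# Hypothetical trigger phrases; a real system would need a far richer rule set.
PENALTY_PATTERNS = [
    r"\bliable\b[^.;]*\bfine\b",   # "liable to a fine ..."
    r"\bpenalty\b",
    r"\bimprisonment\b",
    r"\bguilty of an offence\b",
]
_PENALTY_RE = re.compile("|".join(PENALTY_PATTERNS), re.IGNORECASE)

# Naive splitter: break after ".", "?" or "!" followed by whitespace.
# Legal abbreviations such as "s. 4" would need special handling.
_SENTENCE_SPLIT_RE = re.compile(r"(?<=[.?!])\s+")


def find_penalty_sentences(text: str) -> list[str]:
    """Return the sentences in `text` that match any penalty trigger phrase."""
    sentences = _SENTENCE_SPLIT_RE.split(text.strip())
    return [s for s in sentences if _PENALTY_RE.search(s)]


if __name__ == "__main__":
    sample = (
        "Every operator shall keep records. "
        "A person who contravenes section 4 is liable to a fine not exceeding $5,000. "
        "This Act comes into force on proclamation."
    )
    for sentence in find_penalty_sentences(sample):
        print(sentence)
```

Sentences flagged this way are only candidates; the amount and currency still have to be extracted from them in later steps.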

An especially challenging part of this is converting numbers written as words (e.g. two hundred fifty thousand) into numerals (e.g. 250,000). There is a Google ML paper on this topic, but we went with a more traditional parsing/factoring approach that is inspired by the Google paper but does not use ML.
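A traditional, non-ML parser for number words can work by factoring the phrase into groups below one thousand that are then multiplied by scale words. The sketch below, built around the hypothetical function `words_to_number`, assumes plain English cardinal words (no fractions and no ordinals such as "fifth") and is not Global-Regulation’s actual code.

```python
# Values of the small number words.
UNITS = {
    "zero": 0, "one": 1, "two": 2, "three": 3, "four": 4, "five": 5,
    "six": 6, "seven": 7, "eight": 8, "nine": 9, "ten": 10,
    "eleven": 11, "twelve": 12, "thirteen": 13, "fourteen": 14,
    "fifteen": 15, "sixteen": 16, "seventeen": 17, "eighteen": 18,
    "nineteen": 19,
}
TENS = {
    "twenty": 20, "thirty": 30, "forty": 40, "fifty": 50,
    "sixty": 60, "seventy": 70, "eighty": 80, "ninety": 90,
}
# Scale words close off the current group and multiply it.
SCALES = {"thousand": 10**3, "million": 10**6, "billion": 10**9}


def words_to_number(phrase: str) -> int:
    """Convert an English number phrase such as 'two hundred fifty thousand' to an int."""
    total = 0    # sum of groups already closed by a scale word
    current = 0  # group currently being built (always below 1000)
    tokens = phrase.lower().replace("-", " ").replace(",", " ").split()
    for token in tokens:
        if token == "and":
            continue  # allows "one hundred and five"
        if token in UNITS:
            current += UNITS[token]
        elif token in TENS:
            current += TENS[token]
        elif token == "hundred":
            current *= 100
        elif token in SCALES:
            total += current * SCALES[token]
            current = 0
        else:
            raise ValueError(f"unrecognised number word: {token!r}")
    return total + current
```

For example, `words_to_number("two hundred fifty thousand")` returns `250000`, and formatting it with `f"{n:,}"` gives `'250,000'`. Because "hundred" multiplies only the current group while "thousand" and larger scales close it, a phrase like "one million two hundred thousand" comes out as 1,200,000.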

Another challenging aspect is excerpting the text around extracted penalties. Even an ML-based system would rely heavily on NLP methods to create a useful product.
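One simple way to excerpt around an extracted penalty is to take a window of characters on either side of the match and trim it to whole words. The function `excerpt_around` and its `window` parameter are hypothetical; a production system might instead excerpt by sentence or clause boundaries.

```python
import re


def excerpt_around(text: str, start: int, end: int, window: int = 60) -> str:
    """Return a snippet of `text` around text[start:end], padded by roughly
    `window` characters on each side and trimmed to whole words."""
    left = max(0, start - window)
    right = min(len(text), end + window)
    # Push the left edge past the first space so we don't start mid-word.
    if left > 0:
        space = text.find(" ", left, start)
        if space != -1:
            left = space + 1
    # Pull the right edge back to the last space so we don't end mid-word.
    if right < len(text):
        space = text.rfind(" ", end, right)
        if space != -1:
            right = space
    snippet = text[left:right].strip()
    # Mark truncation on either side with an ellipsis.
    prefix = "..." if left > 0 else ""
    suffix = "..." if right < len(text) else ""
    return f"{prefix}{snippet}{suffix}"


if __name__ == "__main__":
    law = ("Every operator shall keep records for five years. A person who "
           "contravenes section 4 is liable to a fine not exceeding $5,000 or to "
           "imprisonment for a term not exceeding six months, or to both.")
    match = re.search(r"\$[\d,]+", law)  # locate the extracted amount
    if match:
        print(excerpt_around(law, match.start(), match.end(), window=40))
```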

And what kind of software are you using? Is it open-source, homemade, or a mix of the two?

We use a mix.

The secret sauce of our system is proprietary code, but it’s built on a very solid foundation of open-source packages and commercial software. Wherever possible we’ve tried to build on existing platforms.

Main open-source software: PHP, MySQL, bash, jQuery, URI.js, and Weka. I have also been using the brilliant MySQL reporting tool from InetSoft; it’s extremely capable, so have a look at it.

Commercial software/APIs: AWS RDS, AWS CloudSearch, Microsoft Cognitive Services Text Analytics, Microsoft Translator, Google Translate (for some of our older translations; we’re transitioning to 100% Microsoft) and plot.ly.

It should be acknowledged that in the last year we have received more than $500,000 in equity-free service credits (translation, VMs, cognitive services, etc.) from Microsoft, Google and Amazon.

PenaltyAI gathers information from many different countries. How does the system handle all the foreign languages? And how does it handle non-Latin scripts?

All legislation is first machine-translated into English, and PenaltyAI Search works on this standardized, plain-text English to extract the compliance clauses. When PenaltyAI extracts the actual penalty amount, a separate step identifies the currency and applies the relevant exchange rate to convert the amount to USD.
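The currency step can be sketched as detecting a currency marker next to the extracted amount, mapping it to an ISO 4217 code, and multiplying by an exchange rate. Everything here is an assumption for illustration: the `penalty_to_usd` function, the small alias table, and especially the fixed rates in `USD_PER_UNIT`, which are placeholder values rather than real market rates. A bare "$" is ambiguous (in a Canadian statute it means Canadian dollars), so the sketch falls back to the enacting jurisdiction's currency in that case.

```python
import re
from decimal import Decimal

# Placeholder rates (USD per one unit of each currency), for illustration
# only; a real system would refresh these from an exchange-rate feed.
USD_PER_UNIT = {
    "USD": Decimal("1.00"),
    "CAD": Decimal("0.75"),
    "GBP": Decimal("1.30"),
    "EUR": Decimal("1.10"),
}

# Unambiguous currency markers mapped to ISO 4217 codes.
CURRENCY_ALIASES = {
    "us$": "USD", "c$": "CAD", "£": "GBP", "€": "EUR",
    "usd": "USD", "cad": "CAD", "gbp": "GBP", "eur": "EUR",
    "pounds": "GBP", "euros": "EUR",
}

# Optional currency marker before or after a number such as "5,000.50".
AMOUNT_RE = re.compile(
    r"(?P<prefix>US\$|C\$|\$|£|€|USD|CAD|GBP|EUR)?\s?"
    r"(?P<amount>\d[\d,]*(?:\.\d+)?)"
    r"(?:\s?(?P<suffix>dollars|pounds|euros|USD|CAD|GBP|EUR))?",
    re.IGNORECASE,
)


def penalty_to_usd(amount_text: str, default_currency: str) -> Decimal:
    """Convert an extracted penalty string (e.g. '£5,000') to USD.

    A bare '$', 'dollars', or a missing marker is taken to mean
    `default_currency`, i.e. the currency of the enacting jurisdiction.
    """
    match = AMOUNT_RE.search(amount_text)
    if match is None:
        raise ValueError(f"no amount found in {amount_text!r}")
    marker = (match.group("prefix") or match.group("suffix") or "").lower()
    if marker in ("", "$", "dollars"):
        code = default_currency
    else:
        code = CURRENCY_ALIASES[marker]
    amount = Decimal(match.group("amount").replace(",", ""))
    # Round to cents; Decimal avoids binary floating-point surprises.
    return (amount * USD_PER_UNIT[code]).quantize(Decimal("0.01"))
```

With these placeholder rates, `penalty_to_usd("£5,000", default_currency="GBP")` returns `Decimal('6500.00')`. The function expects the already-extracted amount string rather than a whole sentence, since a section number appearing earlier in a sentence would otherwise be picked up first.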

Non-Latin scripts aren’t a problem (UTF-8!). We designed Global-Regulation.com from the beginning to handle non-English characters (we’re based in Toronto, and French characters are a fact of life in Canada).

How big do you think the market is for a product such as PenaltyAI? What has the response been like so far?

Estimates suggest that over $1 trillion is spent worldwide on regulatory compliance and that over 1 million people around the world work in regulatory compliance. This is a huge market, much bigger than the legal research tool market.

We only released the product last week, so it is hard to get meaningful feedback at this early stage. What we can say is that the response from big legal search companies and governance, risk and compliance (GRC) platform developers has been overwhelming. Many people are sitting on legal data without knowing how to turn it into insights.

We’ve already formed a partnership with a leading GRC platform and are hoping to license the PenaltyAI Search API to companies like LexisNexis and Thomson Reuters.

It will probably take a while for professionals in this field to get over their shock at what the machine can actually do, start working with it, and suggest improvements and adjustments to fit their needs.

How do you charge for this? And will you be providing it free to law students, for example, as some legal research companies do?

We provide 10 free searches to everyone and are happy to extend free trials to anyone who is interested. We’ve had free-trial users from around the world and have subscribers in five countries.

As part of our mission to improve regulatory quality and make our product affordable to regulators, academics and businesses around the world, we adjust our prices according to the purchasing power of each country. On our subscription page, users can select their country to see the price in USD. We’re the only legaltech company we know of that prices like this, and we’re proud of it.
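Purchasing-power pricing can be sketched as scaling a base USD price by each country's price level relative to the US, i.e. price = base × max(ratio, floor). The base price, the ratios in `PRICE_LEVEL_RATIO`, the `MIN_RATIO` floor and the function name `price_for_country` are all hypothetical; the interview does not say how Global-Regulation actually computes its prices.

```python
from decimal import Decimal, ROUND_HALF_UP

BASE_PRICE_USD = Decimal("100.00")  # hypothetical US list price

# Hypothetical ratios of each country's price level to the US price level,
# of the kind derived from purchasing power parity (PPP) data.
PRICE_LEVEL_RATIO = {
    "US": Decimal("1.00"),
    "CA": Decimal("0.85"),
    "IN": Decimal("0.30"),
}
MIN_RATIO = Decimal("0.20")  # hypothetical floor so no price falls near zero


def price_for_country(country_code: str) -> Decimal:
    """Scale the base USD price by the country's relative price level.

    Unknown countries are charged the base price.
    """
    ratio = PRICE_LEVEL_RATIO.get(country_code, Decimal("1.00"))
    ratio = max(ratio, MIN_RATIO)
    price = BASE_PRICE_USD * ratio
    return price.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)
```

The price is still quoted in USD, matching the subscription page described above; only the amount changes with the selected country.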

Would it be fair to say that this is just the beginning of new product development for Global-Regulation? What else do you have planned that uses AI tech?

It is fair to say that. We are focusing on two aspects: big data and analytics. On the big data side, now that we have most of the world’s legislation translated into English, we’re working on ways to draw insights out of it, similar to how a human would analyse the law.

On the analytics side, having already provided legal complexity and risk measures, we are looking to enhance our search capabilities with an AI-driven search that actually interacts with the user in English. We see this as the new, third generation of search, after the old Boolean search and the newer Google-style single search box. Search interfaces are a technology in themselves.

We love what we do, are not dependent on investors, and have been on both sides of the product (as users and as developers). Hence we see Global-Regulation becoming the policy advisor of the future.

Discover more from Artificial Lawyer
