This is a Guest Post by the well-known British technology lawyer, Richard Kemp, who for several decades has been an expert in the area where law and technology meet.

Kemp previously helped to found the tech law firm, Kemp Little, and now runs his own specialist firm, Kemp IT Law in London. In this article he shares his views on AI and highlights some of the legal issues that connect to this growing wave of technology.

‘It’s only AI until you know what it does, then it’s just software’ is a good way of making artificial intelligence accessible.

Fuelled by exploding volumes of big data – digital data is growing at a compound rate of 60% per year – AI can be seen as the convergence of machine processing, learning, perception and control.

Exponential growth in machine processing power has enabled the techniques of machine learning, by which computers learn by examples and teach themselves to carry out pattern recognition tasks without being explicitly programmed to do so. Combining machine learning with billions of Internet-connected sensors enables machine perception – advances in implantable and wearable devices, personal digital assistants, the Internet of Things (‘IoT’), connected homes and smart cities. Add actuation – the ability to navigate the environment – to static machine learning and perception and you get machine control – autonomous vehicles, domestic robots and drones.

Deep learning, a type of machine learning, is worth calling out for particular attention. In research consultancy Gartner’s ‘Top 10 Strategic Technology Trends for 2017’ survey,[1] Gartner Vice-President and Fellow David Cearley said: ‘… over the next 10 years, virtually every app, application and service will incorporate some level of AI. This will form a long-term trend that will continually evolve and expand the application of AI and machine learning for apps and services.’

Deep learning, a machine learning technique, is emerging as AI’s ‘killer app’ enabler. It works by first using large training datasets to teach AI software to accurately recognise patterns from images, sounds and other input data and then, once trained, the software’s decreasing error rate enables it to make increasingly accurate predictions. Deep learning is the core technology behind the current rapid uptake of AI in a wide variety of business sectors from due diligence and e-discovery by law firms to the evolution of autonomous vehicles.

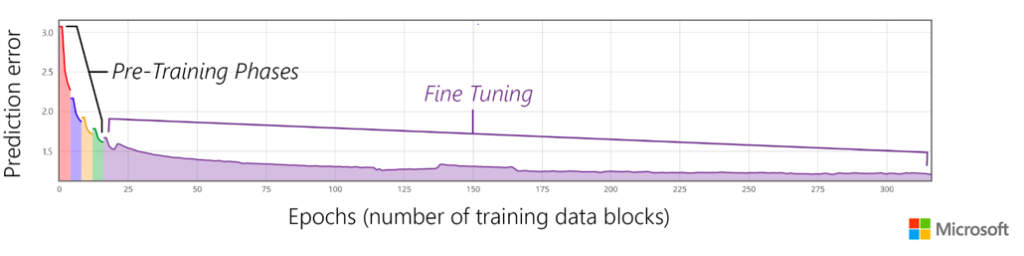

To illustrate: in October 2016 Microsoft released an updated version of Cognitive Toolkit, its deep learning acceleration software, and the accompanying blog post[2] gave an example (reproduced above) of how the toolkit used training sets to increase speech recognition accuracy.

This pattern – using the machine learning software to reduce prediction error through training and fine tuning, then letting the software loose on the workloads it’s to process – is at the core of AI in professional services. It’s what’s behind the AI arms race in law (standardising componentry of higher value work like due diligence, e-discovery in litigation, property reports on title, regulatory compliance), accountancy (audit processes, tax compliance, risk) and (coupled with IoT sensors) insurance, for example.
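For readers who want to see the pattern in miniature, the sketch below trains a very small classifier and then applies it to data it has not seen. It is an illustration only: it uses a single-layer logistic model rather than a deep network, the labelled examples are synthetic, and every name in it (`make_examples`, `train`, `error_rate`) is hypothetical rather than taken from any toolkit mentioned in this article.

```python
import math
import random


def sigmoid(z):
    """Squash a raw score into a probability between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-z))


def make_examples(n, rng):
    """Synthetic labelled data (an assumption): label is 1 when x + y > 1."""
    examples = []
    for _ in range(n):
        x, y = rng.random(), rng.random()
        examples.append(((x, y), 1 if x + y > 1.0 else 0))
    return examples


def predict(weights, bias, features):
    """Return the predicted label (0 or 1) for one input."""
    score = bias + sum(w * f for w, f in zip(weights, features))
    return 1 if sigmoid(score) >= 0.5 else 0


def error_rate(weights, bias, examples):
    """Fraction of examples the model currently gets wrong."""
    wrong = sum(1 for features, label in examples
                if predict(weights, bias, features) != label)
    return wrong / len(examples)


def train(examples, epochs=50, learning_rate=0.5):
    """Adjust the weights example by example; record the error after each pass."""
    weights, bias = [0.0, 0.0], 0.0
    history = []
    for _ in range(epochs):
        for features, label in examples:
            score = bias + sum(w * f for w, f in zip(weights, features))
            gradient = sigmoid(score) - label  # how far off the prediction was
            weights = [w - learning_rate * gradient * f
                       for w, f in zip(weights, features)]
            bias -= learning_rate * gradient
        history.append(error_rate(weights, bias, examples))
    return weights, bias, history


if __name__ == "__main__":
    rng = random.Random(0)                  # fixed seed so runs are repeatable
    training_set = make_examples(200, rng)  # step 1: the training data
    workload = make_examples(200, rng)      # step 2: unseen "production" data

    untrained = error_rate([0.0, 0.0], 0.0, training_set)
    weights, bias, history = train(training_set)

    print("error before training:", untrained)
    print("error after training: ", history[-1])
    print("error on new workload:", error_rate(weights, bias, workload))
```

The error measured on the separate workload, not on the training data, is the figure that matters in practice, which is why the sketch keeps the two sets apart.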

Increasingly rapid adoption of AI over the next five years will bring challenges for law and policy makers as the law struggles to keep up. A couple of initial ‘do’s’ and ‘don’ts’:

- don’t anthropomorphise AI: in legal terms, AI is personal property not a person (what you might call the ‘I Robot fallacy’), AI systems aren’t ‘agents’ in any legal sense (the ‘agency fallacy’) and AI platforms themselves don’t possess separate legal personality (the ‘entity fallacy’).

- don’t be blinded by the glare of the new and do go back to first principles – whether it’s regulation or in contract, tort or copyright law.

Data law is now right at the centre of AI. In the run-up to May 2018, when both the General Data Protection Regulation and the Network and Information Systems Directive take effect, data protection and data security are rising up the business agenda as firms prepare themselves for a new data-centric world. But it’s not just privacy and security – legal rights and duties around data licensing (have I got the right permissions to do what I’m doing with my data?) and data sovereignty (my data in your data centre) are also becoming more important.

Regulators around the world are grappling with how to address AI. What happens when an autonomous car and bus collide? Or when smart contract systems incorrectly record a negotiated mortgage or personal loan agreement? Or when AI-enabled due diligence misses the point?

The emerging consensus on approach involves a number of steps: establishing governmental advisory centres of AI excellence; adapting existing regulatory frameworks to cater for AI where possible; and (perhaps) some system of registration for particular types of AI.

We’re likely to see rapid and complex developments around legal theories of liability. For professional services firms, these are unlikely to be as acute as, say, for autonomous vehicles (ethical, liability and communications issues around autonomous vehicle accidents will figure large over the next few years) but important legal issues still remain to get worked out as AI becomes the norm.

What is the balance of rights and responsibilities between the firm and its AI software provider and cloud service provider? What is negligence (breach of the common law duty of care) in AI terms? And how will the state and industry regulators intervene to manage AI and AI liability?

At the moment, using AI in professional services in the mid-market and above isn’t easy: getting the right input data, getting the dataset tuning right, and then correctly applying the trained model to the production workload – none of this is plain sailing. Contractually, in terms of the statement of work between firm and client, the accent should be on collaboration and setting realistic expectations and outcomes.

Much of the work is currently effectively in ‘beta’ rather than at scale, and this will be reflected from the firm’s point of view in lower service (and likely lower fee) levels, with the expectation that as more experience is gained so service levels and fees will rise. Firms’ professional indemnity insurers are getting more interested in the risks that arise using AI in professional services, so an early conversation to let them know and get advance notice of anything they particularly care about may not be out of place.

References:

[1] ‘Gartner Identifies the Top 10 Strategic Technology Trends for 2017’, 18 October 2016 http://www.gartner.com/newsroom/id/3482617

[2] ‘Microsoft releases beta of Microsoft Cognitive Toolkit for deep learning advances’, 25 October 2016 http://blogs.microsoft.com/next/2016/10/25/microsoft-releases-beta-microsoft-cognitive-toolkit-deep-learning-advances/#sm.0000lt0pxmj5dey2sue1f5pvp13wh
