The UK’s Home Office is facing a landmark Judicial Review to reveal how an algorithm it uses to triage visa applications works – in what appears to be the first case of its kind here, and which could open up a series of future similar demands in the public and private sectors if successful.

The legal challenge has been launched by campaign groups Foxglove – which focuses on legal rights in relation to the abuse of technology – and the Joint Council for the Welfare of Immigrants. They believe the algorithm ‘may be discriminating on the basis of crude characteristics like nationality or age – rather than assessing applicants fairly, on the merits’.

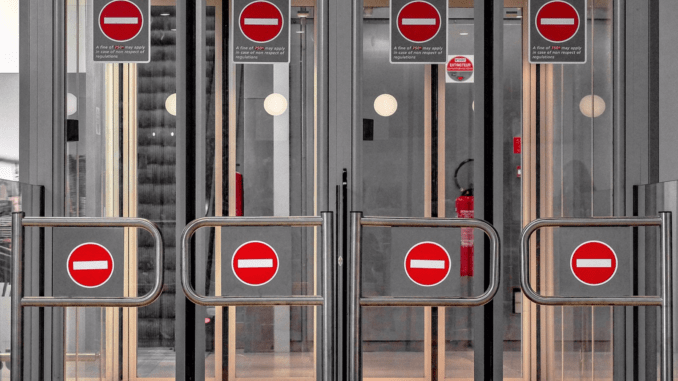

They also believe that it operates a three-stream system, which they describe as ‘a fast lane (Green), a slow lane (Yellow), or a full digital pat-down (Red)’.

The problem is that the Government does not appear to be keen to hand over information and make public the way its algorithm works.

The move is interesting in several ways. It looks to be the first time the UK courts have been called upon to force the revelation of an algorithm’s code and what biases it has had built into it.

Also, the move comes just a few months after the Law Society of England & Wales published a report, spearheaded by the former President of the organisation, Christina Blacklaws, that called for an open register of algorithms to help address any ethical concerns the public may have.

However, the call for a register was primarily aimed at the criminal justice sector, not immigration law, which is the area this matter rests upon.

In a statement, Martha Dark, who directs organisational operations, development and resources at Foxglove, said: ‘We’ve discovered a secret technology that basically works as a digital hostile environment. We need your help to expose it and to take the Home Office to court.’

‘They’ve given a shadowy, computer-driven process the power to affect someone’s chances of getting a visa. And as best as we can tell, the machine is using problematic and biased criteria, like nationality, to choose which “stream” you get in. People from rich white countries get Speedy Boarding; poorer people of colour get pushed to the back of the queue,’ she added.

Foxglove is also run by US-qualified lawyer Cori Crider, who directs and leads casework. The organisation has been involved in campaigns related to facial recognition and data privacy.

A couple of thoughts from Artificial Lawyer are: this campaign has been described in the last 24 hours as all about ‘AI’, although an algorithm that sorts people by ‘age and nationality’ into work streams for Home Office case workers shows no evidence – as yet – of machine learning. So, to call it AI seems a bit of a misnomer. To Foxglove’s credit they don’t seem to be using the term and refer to the problematic piece of software as ‘an algorithm’.

Also, all software that seeks to make some kind of judgment on data it receives will contain biases from the people that have written it, whether intentionally or not, or as a side effect of not understanding the data they were using to build the parameters of the algorithm. So, aside from making such software visible to the people affected by it, there is also the issue of knowing what biases it has and if they are ethical.

Initially most of us will assume that all bias is ‘bad’, but in reality every decision we make contains some bias. The issue is what basis that bias is working on, and whether it is ethical.

For example, a company advertises a job for a skilled IT technician. It employs a simple CV reading system to only accept applications from people who have worked in at least one IT role before – which was a clear stipulation in the job advert. Is that a bias? Yes, it is biased. But is the bias justified and ethical? It seems so. It does not seem unethical to use a piece of software to make sure job applicants meet a job advert’s work experience criteria – as long as that is all it is doing and it is not venturing into more subjective areas.
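To make that example concrete, here is a minimal Python sketch of such a filter. Everything in it is hypothetical: the `Role` and `Application` types, the `is_it_role` flag (assumed to have been set when the CV was read) and the function names are ours for illustration, and are not taken from any real recruitment product. The point is simply that the one rule the software applies is written down in plain sight, so it can be checked against the job advert.

```python
from dataclasses import dataclass
from typing import Iterable, List, Tuple


@dataclass(frozen=True)
class Role:
    """One past job on a CV (hypothetical structure)."""
    title: str
    is_it_role: bool  # assumption: flagged when the CV was parsed


@dataclass(frozen=True)
class Application:
    """A job application with the applicant's past roles."""
    applicant: str
    roles: Tuple[Role, ...]


def meets_experience_rule(application: Application) -> bool:
    """The single, stated criterion: at least one previous IT role."""
    return any(role.is_it_role for role in application.roles)


def screen(applications: Iterable[Application]) -> Tuple[List[str], List[str]]:
    """Split applicants into (accepted, rejected) using only the stated rule."""
    accepted: List[str] = []
    rejected: List[str] = []
    for application in applications:
        if meets_experience_rule(application):
            accepted.append(application.applicant)
        else:
            rejected.append(application.applicant)
    return accepted, rejected


if __name__ == "__main__":
    sample = [
        Application("Applicant A", (Role("IT support analyst", True),)),
        Application("Applicant B", (Role("Barista", False),)),
    ]
    accepted, rejected = screen(sample)
    print("Accepted:", accepted)
    print("Rejected:", rejected)
```

A filter like this is easy to audit because the criterion is a single line that matches the advert. The difficulty starts when further rules are added that nobody outside the organisation can see.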

But… this is, of course, a very grey area. As soon as we stray off the safe territory of what seems by ‘common sense’ to be fair bias (which sounds like a contradiction in terms, but is how humans operate in reality) then we are in uncertain territory.

We don’t know how the Home Office algorithm works. All that the campaigners know is that it is meant to be triaging visa applicants, fast-tracking some and putting more focus on others. They suspect that the bias used to carry out this triage is unethical. But they can only know this once they are allowed to see its digital workings.

Ironically, when this information is finally made public, it won’t so much reveal the bias of the software as the institutional bias at the Home Office – which set the parameters for this system.

In short, this campaign goes to the heart of the algorithmic dilemma that all societies will increasingly face, i.e. software making decisions about people who have no way of intervening in those decisions, or of knowing how they were made.

We have to be careful not to throw the entire algorithmic system out in the effort to make it ethical, but seeking to make sure such decision-making software is ethical has to be the right thing to do.
