A few weeks ago Artificial Lawyer published the New Legal AI Map, which showed the main branches of NLP tools used in the legal sector today. One key branch is Pre-execution tools, i.e. pre-signature, which covers areas such as contract red-lining, risk analysis and negotiation.

With that in mind, Dan Broderick, the CEO and founder of BlackBoiler, and his team have kindly put together their own take on the taxonomy of this particular branch of the wider tree.

There are several similarities to Artificial Lawyer’s original map, but this branch map includes Broderick’s personal additions and comes with an explanation of what it shows – which naturally represents his own views. Your feedback is very welcome, especially if you are a company also working in this field. Enjoy.

—

Pre-Execution Contract Review Taxonomy

By Dan Broderick, BlackBoiler

The legal tech market has become increasingly crowded, especially the pre-execution contract review space. There are now dozens of pre-execution tools out there focused on solving some of the biggest issues that law firms and corporate legal departments currently face.

Yet, during recent conversations with peers, potential buyers, and even journalists, we began to realise that there is often confusion around contract lifecycle management (CLM) and, more specifically, around what pre-execution contract review products actually do.

There is a lack of differentiation in how the various companies define themselves to customers, so we felt it was important to create a taxonomy to help future customers, and the broader legal industry, understand which tools do what.

Let’s start with a few examples of similar marketing lingo and taglines on company websites or LinkedIn pages. Below are examples from four different legal tech companies:

- ‘Automate Your Contract Review’

- ‘AI-Driven’

- ‘Pre-screening’

- ‘Powered by artificial intelligence’

This lack of differentiation confuses customers, who are unable to identify which tool will best fit their contract review needs. Carving out specific areas within the pre-execution space, such as risk scoring, classification, extraction, and contract markup, would make it clearer what each individual tool does.

There also needs to be more education and segmentation from service providers to truly differentiate products, so customers know which tool is best for their specific problems.

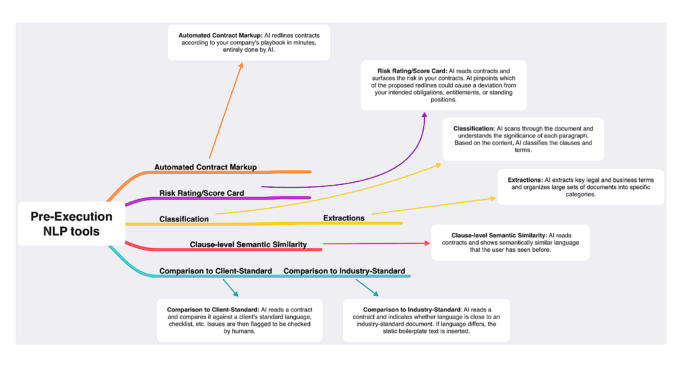

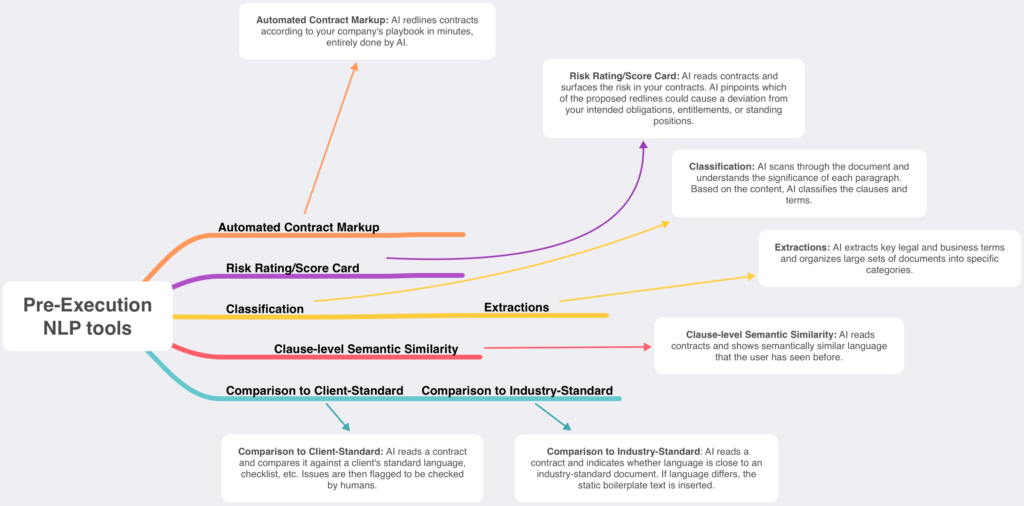

So, how is the pre-execution space broken down? In order of complexity:

Comparison to Client Standard: AI reads a contract and compares it against a client’s standard language, checklist, etc. Issues are then flagged to be checked by humans.

Comparison to Industry-Standard Language (Benchmarking): AI reads a contract and indicates whether language is close to an industry-standard document. If language differs, the static boilerplate text is inserted.

Clause-level Semantic Similarity: AI reads contracts and shows semantically similar language that the user has seen before.

Classification: AI scans through the document and understands the significance of each paragraph. Based on the content, AI classifies the clauses and terms.

Extraction: AI extracts key legal and business terms and organizes large sets of documents into specific categories.

Risk Rating/Scorecard: AI reads contracts and surfaces the risk they contain. AI pinpoints which of the proposed redlines could cause a deviation from your intended obligations, entitlements, or standing positions.

Automated Contract Markup: AI redlines contracts according to your company’s playbook in minutes, with the markup done entirely by AI.
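As a rough illustration of the simplest category in the list, Comparison to Client Standard, the Python sketch below compares each clause of an incoming contract against a client's standard clauses and flags the ones that stray too far for human review. It is only a toy under stated assumptions, not a description of how any vendor's product works: the function names `best_match` and `flag_for_review`, the sample clauses, and the 0.8 threshold are all hypothetical, and plain character-level similarity from Python's standard `difflib` module stands in for whatever AI model a real tool would use.

```python
from difflib import SequenceMatcher


def best_match(clause, standard_clauses):
    """Return (score, standard_clause) for the closest standard clause.

    Assumes standard_clauses is non-empty. The score is difflib's
    character-level similarity ratio (0.0 to 1.0), a stand-in for a real model.
    """
    scored = [
        (SequenceMatcher(None, clause.lower(), std.lower()).ratio(), std)
        for std in standard_clauses
    ]
    return max(scored, key=lambda pair: pair[0])


def flag_for_review(contract_clauses, standard_clauses, threshold=0.8):
    """Flag clauses whose closest standard clause scores below the threshold.

    The 0.8 threshold is an arbitrary illustrative value.
    """
    flagged = []
    for clause in contract_clauses:
        score, closest = best_match(clause, standard_clauses)
        if score < threshold:
            flagged.append(
                {"clause": clause, "closest_standard": closest, "score": round(score, 2)}
            )
    return flagged


# Hypothetical client standard language and incoming contract clauses.
STANDARD = [
    "Each party shall keep the other party's confidential information secret.",
    "This agreement is governed by the laws of England and Wales.",
]

INCOMING = [
    "Each party shall keep the other party's confidential information secret.",
    "The supplier may assign this agreement to any third party without consent.",
]

# Print the clauses that a human reviewer should check.
for item in flag_for_review(INCOMING, STANDARD):
    print(item["score"], item["clause"])
```

Here the first incoming clause matches the standard exactly and passes, while the assignment clause has no close counterpart and is flagged. The more complex categories further down the list would need progressively more than string comparison, which is the point of ordering the taxonomy by complexity.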

Given that the legal tech world is ever-changing, we see this as a living taxonomy that will change as the space evolves. We tried to address each type of pre-execution tool on the market, and I’d love to hear readers’ thoughts on the result.

—

[ This is an educational article, provided as a free market resource. But, if you re-use this particular map, please make sure to clearly credit BlackBoiler, and Artificial Lawyer. ]

Hello,

It would be great to see a version of this map that indicates which tools on the market include which features.

Thanks for putting this together. I agree that companies in this space need to do a better job of explaining what they do. Our company (TermScout) is guilty of this – we’re a startup in this space, and we’re probably spending 98% of our energy on building great products and 2% leftover on marketing. The result – we’ve used every one of the sample marketing slogans above, along with all the other ones.

Really good breakdown I think; nice one.

I’ve been working recently with RegulAItion (who have developed a super-interesting privacy-enhanced sensitive data-access and collaboration infrastructure for AI/ML), and as part of a market-mapping exercise with them have picked up from a number of legaltech companies in the ‘AI’ space that they sometimes struggle with use-case articulation, and that this can be further compounded by, for example, the data-strategy maturity and capability of the firms and clients they are engaging with.