Ivo, a contract intelligence platform, did one of those classic AI vs human tests, but this time included Claude for Word as a competitor. Ivo beat the Anthropic LLM, and got close to beating the human lawyer. In fact, their view was that Claude was really weak at contract review – at least ‘out of the box’.

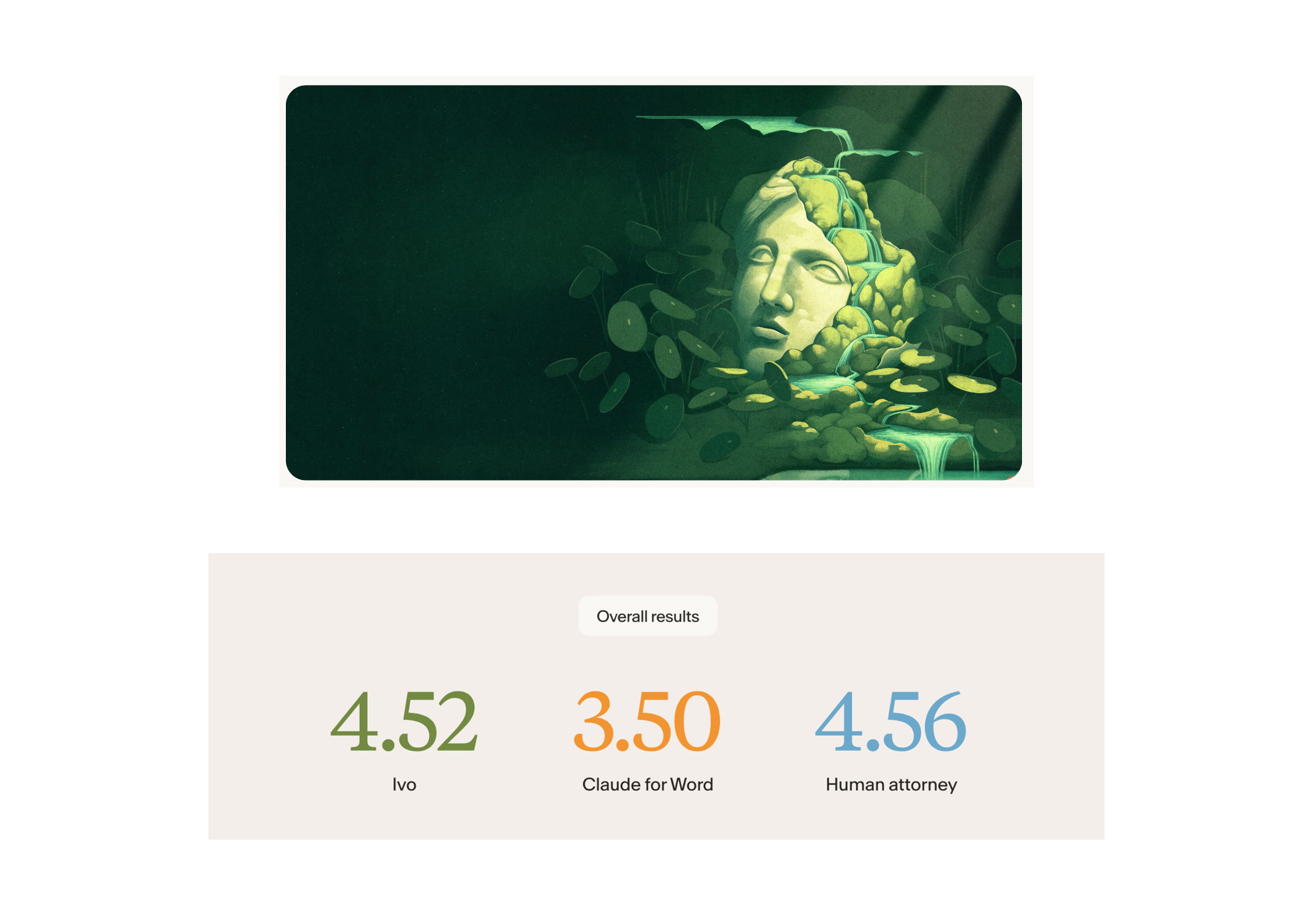

The results of the ‘independent, third-party benchmark’, which evaluated AI-powered contract review performance, were (scores out of 10):

- Human attorney: 4.56

- Ivo: 4.52

- Claude for Word: 3.50

Min-Kyu Jung, Co-founder and CEO of Ivo, commented: ‘We designed this benchmark to change that by putting real tools against real work, judged by real attorneys. What’s emerging is not a replacement for lawyers, but a new way to scale high-quality legal work, where AI handles repeatable tasks and legal teams can focus on strategy, negotiations, and client outcomes.’

So, how did it work?

They explained that the study was designed to answer a question legal teams are actively debating: can general-purpose AI tools like Claude for Word match the performance of purpose-built legal systems in real contract workflows?

Conducted in April 2026, the study compared Ivo, Claude for Word (Opus 4.6), and a practicing Special Counsel at an Am Law 25 firm on 19 real, anonymized contracts.

The outputs were scored in a blind review by three judges, who were all technology transaction attorneys with recent experience either working with an Am Law 100 firm or serving as in-house counsel at technology companies.

Is this a big deal?

AL has to say, none of the scores look amazing….

….but it’s good that the human did best, although not by much. And poor old Claude for Word, with just 3.5 out of 10. Dario will not be happy about that.

Of course, this is just one experiment. And performance can change in many ways depending on a host of factors and differing parameters.

Perhaps the real takeaway here is that the human was really not that much better than the AI tool. And the base models will only get better, so performance will improve.

Also, one could say that a contract AI company would naturally be very keen that Claude for Word was not great. That said, they stressed this was an independent study.

What Did We Learn?

AL asked the company what they believe we have learned from this test, and what Ivo will do now with what it found. They said:

‘A huge question we deal with from in-house legal is ‘Why can’t we do this with Claude?’ or ‘How are you comparing to Claude’s Word Add-In?’

‘We found that generic AI is actually still miles away from achieving what legal AI can do in terms of legal judgment and contract review.

‘The next piece is bridging the gap between AI capabilities and the trust that lawyers have in legal AI outputs.

‘As we’ve worked with our customers on building out the product, we’ve established that playbooks are a great starting point for contract review, but lacks the judgment capabilities that a lawyer would have re: what to apply and what to be flexible on. With that, we’ve rebuilt the product to include the ability to reference from previously executed contracts and deal context, on top of playbooks.’

So, there you go.

—

Here’s some more data from the study.

The human attorney completed the 19 contract reviews in approximately 10 hours. Ivo completed the same work in less than three minutes on average, demonstrating how AI can reduce manual workload while maintaining quality output in structured contract workflows.

All three participants reviewed the same 19 contracts spanning NDAs, MSAs, DPAs, and other commercial agreement types. Judges scored outputs on five criteria (issue spotting, surgical redlining, formatting retention, judgment, and comments) on a scale of 1 to 10. The final scores represent the mean across all three judges. Participants were anonymized during the evaluation to ensure unbiased scoring.
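For anyone who wants to picture the arithmetic, here is a minimal sketch of how a headline score could be averaged from per-judge, per-criterion marks. It is an illustration only, not the study's actual method: the equal weighting of criteria, the three-judge panel (taken from the description of the blind review above), the function name `final_score` and every number in the example are assumptions, not data from the benchmark.

```python
from statistics import mean

# The five criteria named in the study write-up.
CRITERIA = (
    "issue spotting",
    "surgical redlining",
    "formatting retention",
    "judgment",
    "comments",
)


def final_score(scores_by_judge):
    """Average every judge's mark on every criterion (equal weights assumed).

    scores_by_judge maps a judge label to a dict of criterion -> score (1 to 10).
    """
    all_scores = []
    for judge, by_criterion in scores_by_judge.items():
        for criterion in CRITERIA:
            score = by_criterion[criterion]
            if not 1 <= score <= 10:
                raise ValueError(f"{judge}: {criterion} score {score} is outside 1-10")
            all_scores.append(score)
    return round(mean(all_scores), 2)


# Hypothetical marks for one participant; these are made up for illustration.
example = {
    "judge_a": dict(zip(CRITERIA, (5, 4, 6, 4, 5))),
    "judge_b": dict(zip(CRITERIA, (4, 4, 5, 3, 5))),
    "judge_c": dict(zip(CRITERIA, (5, 5, 4, 4, 4))),
}

print(final_score(example))  # 67 points over 15 marks -> 4.47
```

A simple mean like this treats a slip on formatting the same as a slip on legal judgment, which is worth bearing in mind when reading any single headline number.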

The playbook was provided as system configuration for Ivo and as a user prompt for Claude, reflecting real-world deployment conditions, including how legal teams configure and use these tools in practice rather than an abstract model-to-model comparison.

Key Findings

‘Purpose-built legal AI outperforms general AI.

Ivo outperformed Claude for Word across all evaluation criteria. The results underscore the advantage of domain-specific systems, where years of investment in legal logic, workflows, and formatting translate into more consistent, reliable outputs for legal teams.

The largest gap was in surgical redlining and legal judgment.

Ivo consistently selected stronger legal positions and demonstrated deeper contextual understanding in complex agreements, helping teams reduce review cycles and improve consistency across contracts, areas where general-purpose AI showed limitations.

Ivo delivers high-quality contract review at scale.

The near-identical scores between Ivo and the human reviewer suggest Ivo can consistently execute core contract review tasks at a high level, supporting legal teams by reducing manual workloads and improving efficiency.

Objective performance data in legal AI remains rare. This benchmark provides a transparent, real-world evaluation of how purpose-built systems and general AI tools perform on everyday legal work, giving teams a clearer basis for adoption decisions.’

More about Ivo here.

